Once upon a time, I worked on deep-embedded hardware that was locked down, air-gapped, and otherwise running the most minimal of minimal things that were available. It was also often the case that very old legacy Linux kernels and user-space tools were running in production.

On one of these systems in particular, the ssh command was available, tar as well, some other busybox things, but not a whole lot else. In the way of file transfer commands, there was very little. No scp or sftp; tftp may have been available, but I'm sure there were reasons why I was unable to use it, partly due to this being a large file and tftp transfer speeds being something not worthy of praise.

In any case, this week, I struggled to get scp to do something, due to a jump host or something or other. Rather than figure out how to use scp the Right Way™, I remembered fondly how I had hacked together a file transfer solution, and used that instead.

In retrospect, it seems so unnecessary, but it made me remember what I love about being a developer. We often take for granted our ability to solve problems with computers, but for every tool, every language, and every environment, we start out not knowing anything. Exploring the systems we interact with, understanding how they work, and challenging them to do new things is what makes software development interesting. And with that, here's my hacky solution.

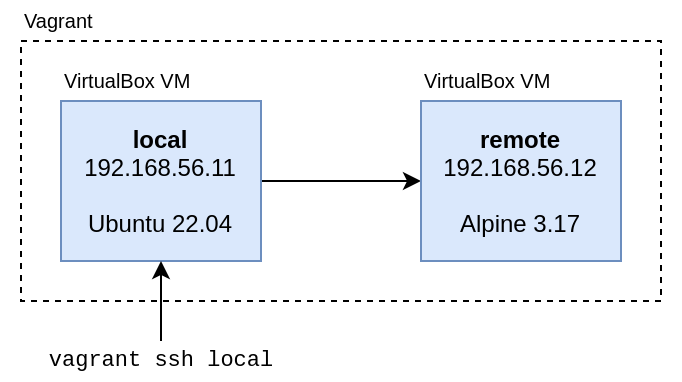

The test environment

Because I really want you to experience the feelings of desperation I felt at the time, I've assembled a Vagrant development environment that really captures the helplessness. It uses Vagrant's Multi-Machine feature to create two machines on a private network with each other, so that we can ssh between them:

- The local machine uses Ubuntu to simulate a desktop workstation.
- The remote machine uses Alpine Linux to simulate the experience of connecting to a remote embedded platform.

Here is the complete Vagrantfile for this environment:

```ruby
# Vagrantfile
Vagrant.configure("2") do |config|
  config.vm.define "local" do |local|
    local.vm.box = "ubuntu/jammy64"
    local.vm.network "private_network", ip: "192.168.56.11"
  end

  config.vm.define "remote" do |remote|
    remote.vm.box = "generic/alpine317"
    remote.vm.network "private_network", ip: "192.168.56.12"
  end
end
```

With the Vagrantfile defined, we can spin up the environment with vagrant up:

```shell
vagrant up
```

Run it and wait for it to finish:

```text
$ vagrant up
Bringing machine 'local' up with 'virtualbox' provider...
Bringing machine 'remote' up with 'virtualbox' provider...
==> local: Importing base box 'ubuntu/jammy64'...
==> local: Matching MAC address for NAT networking...
==> local: Checking if box 'ubuntu/jammy64' version '20230110.0.0' is up to date...
...
...
remote: Guest Additions Version: 7.0.2
remote: VirtualBox Version: 6.1
==> remote: Configuring and enabling network interfaces...
```

Once everything stabilizes, you can access the machine named local with vagrant ssh:

```shell
vagrant ssh local
```

Beautiful, it works just like a real virtual machine:

```text
$ vagrant ssh local
Welcome to Ubuntu 22.04.1 LTS (GNU/Linux 5.15.0-57-generic x86_64)
* Documentation: https://help.ubuntu.com
* Management: https://landscape.canonical.com
* Support: https://ubuntu.com/advantage
System information as of Thu Feb 16 06:19:11 UTC 2023
System load: 0.2392578125 Processes: 105
Usage of /: 3.6% of 38.70GB Users logged in: 0
Memory usage: 20% IPv4 address for enp0s3: 10.0.2.15
Swap usage: 0% IPv4 address for enp0s8: 192.168.56.11
0 updates can be applied immediately.
The list of available updates is more than a week old.
To check for new updates run: sudo apt update
vagrant@ubuntu-jammy:~$
```

From within the local machine, you can connect to the remote machine with:

```shell
ssh 192.168.56.12
```

The username and password for Vagrant boxes are almost always both vagrant, and you'll need to approve the connection:

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
The authenticity of host '192.168.56.12 (192.168.56.12)' can't be established.
ED25519 key fingerprint is SHA256:5toSTfA74/jt7YQzd/RhU6ahKqyt1wckgQRTSIbomo0.
This key is not known by any other names
Are you sure you want to continue connecting (yes/no/[fingerprint])? yeet
Please type 'yes', 'no' or the fingerprint: yes
Warning: Permanently added '192.168.56.12' (ED25519) to the list of known hosts.
vagrant@192.168.56.12's password:
alpine317:~$
```

Create an SSH key

Without a key, we'd be typing the password a lot:

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
vagrant@192.168.56.12's password:
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
vagrant@192.168.56.12's password:
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
vagrant@192.168.56.12's password:
```

If you don't want your life to be like this, you can create a quick SSH key on local and add the public key to remote.

Create the SSH key like so:

```shell
ssh-keygen -t ed25519 -C "local"
```

The output will look something like this. You'll be prompted for a lot of information, but the defaults are fine (press enter a lot):

```text
vagrant@ubuntu-jammy:~$ ssh-keygen -t ed25519 -C "local"
Generating public/private ed25519 key pair.
Enter file in which to save the key (/home/vagrant/.ssh/id_ed25519):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /home/vagrant/.ssh/id_ed25519
Your public key has been saved in /home/vagrant/.ssh/id_ed25519.pub
The key fingerprint is:
SHA256:4oSdBcH0jLa6VAgJE7RcuT5DjgyT6nuxF6PGQFlg7Os local
The key's randomart image is:
+--[ED25519 256]--+
|*=...o+. |
|o+o+ .= |
|.+= . o + |
|++ + = + |
|=.* o B S |
|.= * B . |
|o o O + |
| E B o |
| .+ o |
+----[SHA256]-----+
```

Copy the public key to remote with the ssh-copy-id command:

```shell
ssh-copy-id 192.168.56.12
```

```text
vagrant@ubuntu-jammy:~$ ssh-copy-id 192.168.56.12
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/home/vagrant/.ssh/id_ed25519.pub"
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
vagrant@192.168.56.12's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh '192.168.56.12'"
and check to make sure that only the key(s) you wanted were added.
```

And now, happiness at last. You don't need to type your password:

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
alpine317:~$
```

With our test environment set up, we can start digging into what the ssh command can do. It is a powerful tool that can do a lot more than just log in to machines.

Insight #1: ssh can run commands

The first insight is that ssh can be run non-interactively. If you pass a command as an extra argument after the host, ssh opens a session on the remote machine, runs that command there, and exits without further interaction.

Try adding a command to the end of your ssh command:

```shell
ssh 192.168.56.12 'echo hi there'
```

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'echo hi there'
hi there
```

What happened above is that ssh ran echo on the remote machine, not the local one. Let's try something else, like checking the available RAM:

```shell
ssh 192.168.56.12 'free -m'
```

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'free -m'
total used free shared buff/cache available
Mem: 1990 71 1857 0 62 1814
Swap: 0 0 0
```
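Because ssh exits with the exit status of the remote command (or 255 if ssh itself fails), a remote command can drive an if or && on the local side. The sketch below is a hypothetical helper, remote_has, for checking which tools a minimal box actually ships with. RSH is an assumed variable holding the command prefix used to reach the remote machine; it defaults to the lab VM, and anything that runs a shell string the way ssh does (sh -c, for instance) can stand in for it.

```shell
# remote_has CMD: succeed if CMD exists on the remote machine.
# Hypothetical helper. RSH is an assumed variable naming how to reach the
# remote machine; unset, it falls back to the lab VM used in this post.
# The expansion is deliberately unquoted so "ssh HOST" splits into two words.
remote_has() {
  # ssh returns the remote command's exit status, so `command -v` decides
  # whether this function succeeds. The name is single-quoted for the
  # remote shell, so it must not contain a single quote itself.
  ${RSH:-ssh 192.168.56.12} "command -v '$1' >/dev/null 2>&1"
}

# Usage: report which transfer tools the remote machine has.
#   for tool in scp sftp tftp tar; do
#     remote_has "$tool" && echo "$tool: available"
#   done
```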

You could even specify multiple lines of commands using shell heredocs:

```shell
ssh 192.168.56.12 <<EOF
echo hi there
uname -a
free -m
EOF
```

Congratulations, you've just unlocked a free, terrible, single-node version of Ansible:

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 <<EOF
> echo hi there
> uname -a
> free -m
> EOF
Pseudo-terminal will not be allocated because stdin is not a terminal.
hi there
Linux alpine317.localdomain 5.15.90-0-virt #1-Alpine SMP Wed, 25 Jan 2023 08:18:30 +0000 x86_64 Linux
total used free shared buff/cache available
Mem: 1990 71 1857 0 62 1814
Swap: 0 0 0
```
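One thing to watch with heredocs: with an unquoted delimiter like <<EOF, your local shell expands variables and command substitutions before anything is sent, so $HOME or $(hostname) in the script would report the local machine. Quoting the delimiter (<<'EOF') sends the text verbatim for the remote shell to expand. The sketch below shows the difference locally, with sh reading the script from stdin as a stand-in for ssh 192.168.56.12 (with no command argument, ssh likewise hands stdin to the remote login shell). WHERE is just an illustrative variable that exists only in the local shell.

```shell
# Start clean, then create WHERE without exporting it, so a child shell
# (like the remote shell on the far side of ssh) cannot see it.
unset WHERE
WHERE=local

# Unquoted delimiter: the local shell expands $WHERE before sh runs.
unquoted=$(sh <<EOF
echo "WHERE is [$WHERE]"
EOF
)

# Quoted delimiter: the text is passed through verbatim, so $WHERE is
# expanded by the receiving shell, where it is unset.
quoted=$(sh <<'EOF'
echo "WHERE is [$WHERE]"
EOF
)

echo "$unquoted"   # WHERE is [local]
echo "$quoted"     # WHERE is []
```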

Insight #2: ssh opens the shell

You may not have realized it, but being able to pass a bunch of commands in one go is a good indication that ssh opens a shell to run its commands.

This makes a lot of sense, though, as the default behavior is to open a remote interactive shell. You could expect that the individual commands would run in the same context, if briefly.

Interestingly enough, there's a subtlety here. The remote side always hands your command string to your login shell (as bash -c '...' on this box). For a single simple command, though, bash replaces itself with that program instead of waiting for it to finish, so no bash process is left behind. Only when there's more to coordinate, for example a multi-line script or pipes and redirection, does the shell stick around.

This is easy to confirm. When I run ps by itself, there will be no output, because by the time ps runs, the bash process that launched it has already turned into ps:

```shell
ssh 192.168.56.12 'ps' | grep bash
```

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'ps' | grep bash
```

When I run the grep command on the remote machine instead, bash has a pipeline to manage, so it stays alive long enough to show up:

```shell
ssh 192.168.56.12 'ps | grep bash'
```

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'ps | grep bash'
2610 vagrant 0:00 bash -c ps | grep bash
2612 vagrant 0:00 grep bash
```
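If you'd like to watch this happen without a second machine, the sketch below runs command strings through bash -c, the same form that shows up in the ps output above. It assumes a Linux system with bash and /proc: $$ expands to the PID of the bash that parsed the string, and /proc/PID/comm reports which program that process is currently running.

```shell
# Assumes Linux (/proc) and bash. Single quotes keep $$ from being expanded
# by the outer shell, so it refers to the inner `bash -c` process.

# A lone simple command: bash execs cat in place of itself, so the
# process with bash's PID is now running cat.
single=$(bash -c 'cat /proc/$$/comm')

# A pipeline: bash forks both sides and waits, so its PID still runs bash.
piped=$(bash -c 'cat /proc/$$/comm | cat')

echo "single command: $single"   # single command: cat
echo "pipeline:       $piped"    # pipeline:       bash
```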

If ssh uses the shell, then there's one other feature it most likely supports. Let's take a look.

Insight #3: ssh can forward stdin

Pipes and redirection work with any command that reads standard input, and you can bet that ssh will do the right thing: send along what it receives on stdin and pipe it to the command you ask it to run. Let's try sending the text hello to the cat command on the remote machine:

```shell
echo 'hello' | ssh 192.168.56.12 'cat'
```

```text
vagrant@ubuntu-jammy:~$ echo 'hello' | ssh 192.168.56.12 'cat'
hello
```

Even though we went to a separate machine to run cat, we still see the output as if we had run it locally. This suggests that piping to the ssh command could be used to transfer arbitrary data from one machine to another.

Transfer a single file

Since we already know that the remote shell takes care of shell features like redirection, we can redirect the output of cat to disk on the remote machine. Let's try cat-ing some text to a file:

```shell
echo 'some cool content' | ssh 192.168.56.12 'cat > file.txt'
```

```text
vagrant@ubuntu-jammy:~$ echo 'some cool content' | ssh 192.168.56.12 'cat > file.txt'
```

Looks like no complaints, so let's run ls to see if the file exists:

```shell
ssh 192.168.56.12 'ls'
```

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'ls'
file.txt
```

The file is there! But you may not trust this whole deal, so let's just log in for a round trip of proof:

```text
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12
alpine317:~$ ls
file.txt
alpine317:~$ cat file.txt
some cool content
```

We wrote arbitrary text to a disk on the remote machine. If we redirected the input from a file instead of echo, we'd have a complete file transfer. Let's try it with a file.txt on the local machine:

```text
vagrant@ubuntu-jammy:~$ cat file.txt
some content for this file
```

A complete file transfer:

```shell
cat file.txt | ssh 192.168.56.12 'cat > file.txt'
```

```text
vagrant@ubuntu-jammy:~$ cat file.txt | ssh 192.168.56.12 'cat > file.txt'
vagrant@ubuntu-jammy:~$ ssh 192.168.56.12 'cat file.txt'
some content for this file
```

It works! Hot dog!
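For anything bigger than a line of text (say, the large file from the story above), it's worth confirming the copy is byte-for-byte identical. The sketch below is a hypothetical helper, same_on_remote, that compares SHA-256 checksums computed on each side. It assumes sha256sum exists on both machines (GNU coreutils and typical BusyBox builds provide one), and RSH is an assumed variable for how to reach the remote machine, defaulting to the lab VM.

```shell
# same_on_remote LOCAL_FILE REMOTE_FILE: succeed if both files hash the same.
# Hypothetical helper. RSH is an assumed variable naming how to reach the
# remote machine (word-split on purpose); it defaults to the lab VM.
same_on_remote() {
  # Reading from stdin makes sha256sum print "HASH  -" on both sides,
  # so cut keeps just the hash field for comparison.
  local_sum=$(sha256sum < "$1" | cut -d ' ' -f 1)
  remote_sum=$(${RSH:-ssh 192.168.56.12} "sha256sum < '$2'" | cut -d ' ' -f 1)
  # An empty local hash means the local file was unreadable; a missing
  # remote file yields an empty remote hash, which fails the comparison.
  [ -n "$local_sum" ] && [ "$local_sum" = "$remote_sum" ]
}

# Usage:
#   same_on_remote file.txt file.txt && echo "transfer verified"
```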

You can go the other way by reversing the command:

```shell
ssh 192.168.56.12 'cat file.txt' | cat > file.txt
```
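If you find yourself doing this often, both directions fold neatly into a pair of shell functions. push and pull below are hypothetical names, and RSH is an assumed variable holding the command prefix used to reach the remote machine (it defaults to the lab VM). Note that no -t flag is used: when ssh runs a command, it doesn't allocate a pseudo-terminal by default, which is what lets binary data pass through unmodified.

```shell
# Hypothetical helpers wrapping the cat-over-ssh trick.
# RSH names how to reach the remote machine; it is left unquoted on purpose
# so "ssh 192.168.56.12" splits into a command and its argument.
# Remote paths are single-quoted for the remote shell, so they must not
# contain single quotes themselves.

# push LOCAL REMOTE: copy a local file to the remote machine.
push() {
  ${RSH:-ssh 192.168.56.12} "cat > '$2'" < "$1"
}

# pull REMOTE LOCAL: copy a remote file to the local machine.
pull() {
  ${RSH:-ssh 192.168.56.12} "cat '$1'" > "$2"
}

# Usage:
#   push file.txt file.txt
#   pull file.txt file-copy.txt
```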

Transfer many files

Let's say that I've downloaded a copy of the OpenSSH source:

```shell
git clone https://github.com/openssh/openssh-portable.git
```

```text
vagrant@ubuntu-jammy:~$ git clone https://github.com/openssh/openssh-portable.git
Cloning into 'openssh-portable'...
remote: Enumerating objects: 64386, done.
remote: Counting objects: 100% (398/398), done.
remote: Compressing objects: 100% (216/216), done.
remote: Total 64386 (delta 236), reused 322 (delta 182), pack-reused 63988
Receiving objects: 100% (64386/64386), 25.95 MiB | 6.80 MiB/s, done.
Resolving deltas: 100% (49628/49628), done.
```

Now I want that source on the remote machine for whatever reason; maybe I want to try to build it with the remote machine's compiler. That's an awful lot of files to copy over with cat. On the other hand, I could create a tar archive and send that over. The theory is simple:

- Bundle (and compress) all the files into a single data stream.
- Pipe that data stream to the ssh command like we've shown with cat.
- Extract that data stream back into individual files on the other end.

The manual steps fit well into what we've already done:

```shell
# on the local machine
tar -czf archive.tgz openssh-portable/
cat archive.tgz | ssh 192.168.56.12 'cat > archive.tgz'

# on the remote machine
tar -xzf archive.tgz
```

But I don't want to run three commands; I just want one! This is very achievable, though, because tar supports writing archives to stdout and reading archives from stdin.

Normally, when you use tar, you pass an archive to read or write with the -f flag, like below:

```shell
# reading
tar -xzf archive.tgz

# writing
tar -czf archive.tgz file.txt
```

If you use -f - instead of -f archive.tgz, tar will read archives from stdin and write archives to stdout:

```shell
# reading
cat archive.tgz | tar -xzf -

# writing
tar -czf - file.txt | cat > archive.tgz
```

We can even create an archive of a file and immediately extract it in the same line:

```shell
tar -czf - file.txt | tar -xzf -
```

We know from the previous section that adding ssh on either side will run that side on a remote machine! Combined with tar's ability to handle multiple files, this gives us something of a universal file transfer function:

```shell
tar -czf - openssh-portable/ | ssh 192.168.56.12 'tar -xzf -'
```

This totally works and is awesome! Move the ssh command to the other side, and you can send files the other way:

```shell
ssh 192.168.56.12 'tar -czf - openssh-portable/' | tar -xzf -
```
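Both directions can be wrapped up as well. The sketch below adds tar's -C option so the files land in a chosen directory instead of wherever the remote login happens to start. push_tree and pull_tree are hypothetical names, and RSH is an assumed variable for reaching the remote machine, defaulting to the lab VM. Paths go into the archive as given, so relative paths are the safer choice (GNU tar strips a leading / from member names and warns about it).

```shell
# Hypothetical helpers for the tar-over-ssh pipeline.
# RSH names how to reach the remote machine (unquoted on purpose so it
# word-splits); it defaults to the lab VM. Remote paths are single-quoted
# for the remote shell, so they must not contain single quotes.

# push_tree PATH [REMOTE_DIR]: unpack local PATH under REMOTE_DIR (default: .)
push_tree() {
  tar -czf - "$1" | ${RSH:-ssh 192.168.56.12} "tar -xzf - -C '${2:-.}'"
}

# pull_tree REMOTE_PATH [LOCAL_DIR]: unpack REMOTE_PATH under LOCAL_DIR (default: .)
pull_tree() {
  ${RSH:-ssh 192.168.56.12} "tar -czf - '$1'" | tar -xzf - -C "${2:-.}"
}

# Usage:
#   push_tree openssh-portable/ /tmp
#   pull_tree openssh-portable/ ./from-remote
```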

With this, we've completely removed the single-file limitation of using cat. You can transfer anything: a list of files, a directory, whatever you like!

Cleanup

If you were following along, there isn't much to clean up. Just exit your test environment and run vagrant destroy -f to kill the environment (-f skips the confirmation prompt for each machine):

```shell
vagrant destroy -f
```

```text
$ vagrant destroy -f
==> remote: Forcing shutdown of VM...
==> remote: Destroying VM and associated drives...
==> local: Forcing shutdown of VM...
==> local: Destroying VM and associated drives...
```

Conclusion

The Unix philosophy imparts a richness and composability to Unix environments, so much so that, as this post hopefully demonstrated, when core functionality is missing, you can probably get something working from whatever is left over.

It definitely pays to be familiar with the less common command line switches and supported use cases of some of the common tools we interact with on a daily basis. I am still discovering new things I can do with the same old Unix tools.